
How Frenchie handles 30-minute audio without blocking your agent

Sync transcription is a great way to freeze an agent conversation for five minutes. Async job handling is the small engineering detail that makes transcription actually usable inside a live agent workflow.

Sync APIs feel right at first. You call a function, you wait, you get a result. Simple to code, simple to reason about.

Then you try to transcribe a 30-minute meeting recording inside an agent conversation.

The model submits the job. The HTTP connection opens. Five minutes pass while the audio gets chunked, transcribed, and merged. The agent sits frozen, the user sits frozen, the terminal sits frozen. At minute six someone hits Ctrl-C out of frustration. You just burned the agent context on a task that was never going to work synchronously.

This is why Frenchie's transcription pipeline is async by default.

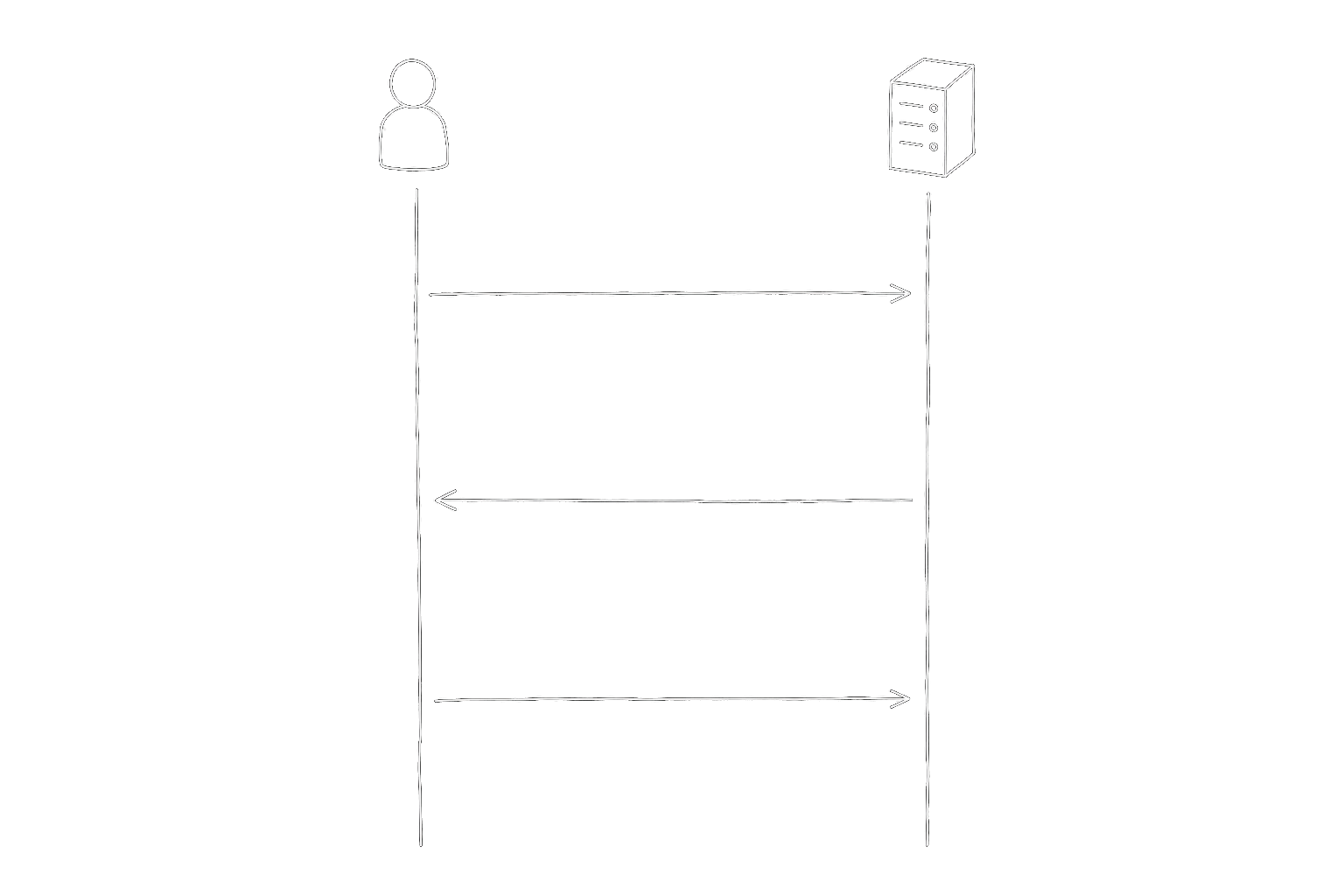

What "async" actually means in practice

When your agent calls transcribe_to_markdown with a file path, three things happen in sequence, fast:

- Frenchie accepts the file (or reads it from disk), validates it, and estimates credits.

- Frenchie creates a job, queues it, and returns a job ID to your agent in under a second.

- Your agent gets control back. The conversation continues.

The actual transcription runs in the background. Long audio is chunked automatically: a 30-minute file splits into segments that process in parallel, then merge back into a single Markdown transcript. Your agent can poll until the result is ready, or, over the stdio transport, retrieve it with a second tool call (get_job_result) once the job completes.
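The submit-then-process shape described above can be sketched in a few lines. This is a minimal in-memory illustration, not Frenchie's actual implementation: the `JobStore` class, the `transcribe_chunk` stub, the 300-second segment length, and the job ID format are all assumptions made for the example.

```python
import threading
import uuid
from concurrent.futures import ThreadPoolExecutor

CHUNK_SECONDS = 300  # assumed segment length, chosen only for this example


def transcribe_chunk(start: int, end: int) -> str:
    """Hypothetical stand-in for a speech-to-text call on one audio segment."""
    return f"[{start}s-{end}s] transcript text"


class JobStore:
    """In-memory job registry: submit() returns at once, work runs in the background."""

    def __init__(self) -> None:
        self._jobs = {}
        self._threads = {}
        self._lock = threading.Lock()

    def submit(self, duration_seconds: int) -> str:
        job_id = f"job_{uuid.uuid4().hex[:8]}"
        with self._lock:
            self._jobs[job_id] = {"status": "processing", "markdown": None}
        worker = threading.Thread(
            target=self._run, args=(job_id, duration_seconds), daemon=True
        )
        self._threads[job_id] = worker
        worker.start()
        return job_id  # the caller gets control back here

    def _run(self, job_id: str, duration_seconds: int) -> None:
        # Split the recording into fixed-length segments.
        bounds = [
            (start, min(start + CHUNK_SECONDS, duration_seconds))
            for start in range(0, duration_seconds, CHUNK_SECONDS)
        ]
        # Transcribe segments in parallel; pool.map keeps them in order.
        with ThreadPoolExecutor(max_workers=4) as pool:
            parts = list(pool.map(lambda b: transcribe_chunk(*b), bounds))
        # Merge the segments back into one transcript.
        with self._lock:
            self._jobs[job_id] = {
                "status": "completed",
                "markdown": "\n\n".join(parts),
            }

    def get(self, job_id: str) -> dict:
        with self._lock:
            return dict(self._jobs[job_id])

    def wait(self, job_id: str) -> None:
        """Block until the background worker finishes (handy in tests)."""
        self._threads[job_id].join()
```

A production queue would persist jobs and run workers in separate processes; the point here is only that `submit` returns before any transcription happens.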

For a 30-minute recording, the total wall-clock time is usually 2-4 minutes. But your agent doesn't sit blocked for 2-4 minutes. It does other work — drafts the meeting summary prompt, looks up attendees in your CRM, pulls last week's decisions from the transcript archive — and collects the Markdown when it lands.

The concrete flow

Here's what a realistic invocation looks like from the agent's side:

> /transcribe ./meetings/2026-04-16-standup.mp3

Tool call: transcribe_to_markdown(file: "./meetings/2026-04-16-standup.mp3")

Response: { jobId: "fr_job_8821", status: "processing", etaSeconds: 180 }

> [agent continues with other work — drafts email, checks calendar]

> [2 minutes later, agent checks job status]

Tool call: get_job_result(jobId: "fr_job_8821")

Response: { status: "completed", savedTo: ".frenchie/2026-04-16-standup/result.md", wordCount: 4821 }

> [agent reads the Markdown file and proceeds]

The agent isn't waiting on transcription. It's doing its regular job while a different piece of infrastructure does the audio work. The experience, from the user's perspective, is "I dropped in a recording and my agent kept moving."
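From the agent's side, that flow might look like the sketch below. The tool names `transcribe_to_markdown` and `get_job_result` come from the flow above; the `FakeFrenchie` client, the Python method signatures, the polling interval, and the rule that any status other than "processing" is final are assumptions for illustration.

```python
import asyncio


class FakeFrenchie:
    """Hypothetical stand-in for the two tool calls; completes after a few polls."""

    def __init__(self, polls_until_done: int = 3) -> None:
        self._remaining = polls_until_done

    async def transcribe_to_markdown(self, file: str) -> dict:
        return {"jobId": "fr_job_demo", "status": "processing"}

    async def get_job_result(self, job_id: str) -> dict:
        self._remaining -= 1
        if self._remaining > 0:
            return {"status": "processing"}
        return {"status": "completed", "savedTo": ".frenchie/demo/result.md"}


async def wait_for_job(client, job_id: str, interval: float = 0.01,
                       max_polls: int = 100) -> dict:
    """Poll until the job leaves the 'processing' state or we give up."""
    for _ in range(max_polls):
        result = await client.get_job_result(job_id)
        if result["status"] != "processing":
            return result
        await asyncio.sleep(interval)  # yields so other tasks can run
    raise TimeoutError(f"job {job_id} still processing after {max_polls} polls")


async def other_work() -> str:
    """Placeholder for whatever else the agent does in the meantime."""
    await asyncio.sleep(0.01)
    return "summary prompt drafted"


async def main() -> tuple:
    client = FakeFrenchie()
    job = await client.transcribe_to_markdown("./meetings/2026-04-16-standup.mp3")
    # Polling and the agent's other work run concurrently.
    result, note = await asyncio.gather(
        wait_for_job(client, job["jobId"]), other_work()
    )
    return result, note
```

Running `asyncio.run(main())` returns both the completed job metadata and the result of the side task, without either one waiting on the other.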

Why we didn't make it sync

We did experiment with sync early on. The simpler code path is attractive: no job IDs, no polling, no second tool call. For files under about five minutes, sync is fine. Anything longer falls over.

The failure modes are ugly:

- Request timeouts. Many HTTP clients and proxies are configured with 30- or 60-second timeouts. Long sync jobs die partway through. The agent gets nothing and has to retry the whole thing.

- Context bloat. If a sync job succeeds, the full Markdown payload comes back as the tool response. A 30-minute transcript is easily 4,000 words. That's 5,000+ tokens dumped into the agent's context by a single tool call.

- Retries are destructive. If the sync call fails, retrying starts the whole transcription over. With async, a failed polling request is cheap — you just poll again.

- Parallelism is impossible. A sync call blocks until it returns, so your agent can't do anything else in the meantime. You've effectively single-threaded the entire conversation on a background job.

Async fixes every one of those. The cost is that tool authors (us) have to build a job queue, retry logic, and a second tool call for result retrieval. One-time cost on our side, recurring benefit on every agent that uses Frenchie.
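The "retries are cheap" point can be made concrete with a small sketch. It assumes a hypothetical `TransientError` for a dropped polling request and a caller-supplied `fetch_status` function; neither is part of Frenchie's documented API. The key property is that a failed poll is retried on its own, and the job itself is never resubmitted.

```python
import time


class TransientError(Exception):
    """Hypothetical error type for a dropped or timed-out polling request."""


def poll_with_retry(fetch_status, job_id, max_attempts=5,
                    base_delay=0.5, sleep=time.sleep):
    """Retry a single status check with exponential backoff.

    Only the cheap status request is repeated; the transcription job
    keeps running server-side and is never started again.
    """
    for attempt in range(max_attempts):
        try:
            return fetch_status(job_id)
        except TransientError:
            if attempt == max_attempts - 1:
                raise  # out of attempts, surface the error
            sleep(base_delay * 2 ** attempt)
```

Compare this with the sync case, where the same network blip would discard minutes of transcription work and force a full restart.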

What this means for you

If you're building an agent workflow that touches transcription, design around async from the start. The right shape is "submit, keep working, pick up the result." Not "wait five minutes staring at a blinking cursor."

Frenchie's stdio MCP contract explicitly returns metadata (savedTo, wordCount, imageCount, creditsUsed) rather than the full transcript in the tool response. Your agent reads the Markdown file if and when it needs the content. That keeps the tool-call response small and the context clean.
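A rough sketch of that metadata-only pattern follows. The field names (savedTo, wordCount, imageCount, creditsUsed) are the ones listed above; the `finish_job` helper, the way words and images are counted, and the `result.md` layout are assumptions for illustration rather than Frenchie's actual logic.

```python
from pathlib import Path


def finish_job(markdown: str, out_dir: Path, credits_used: int) -> dict:
    """Write the transcript to disk and return only small metadata."""
    out_dir.mkdir(parents=True, exist_ok=True)
    result_path = out_dir / "result.md"
    result_path.write_text(markdown, encoding="utf-8")
    return {
        "status": "completed",
        "savedTo": str(result_path),
        # Assumed counting rules: whitespace-separated words,
        # and Markdown image markers for images.
        "wordCount": len(markdown.split()),
        "imageCount": markdown.count("!["),
        "creditsUsed": credits_used,
    }
```

The returned dictionary stays a few hundred characters long no matter how large the transcript is, which is what keeps the tool response out of the agent's context budget.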

This is also why our max file size is 2 GB, not 200 MB. At 2 GB you can hand us an entire conference day's recording. You'd hate the wall-clock time on a sync API — you don't even notice it on an async one.

The small-engineering bet

Async job handling is the kind of engineering detail that doesn't show up in a demo. The demo looks identical — user drops a file, Markdown comes back. But the system behavior under real use is completely different. Real use means long files, slow networks, agent conversations that can't afford to block, and workflows where throughput matters more than latency.

We bet that getting this one engineering detail right would make Frenchie feel qualitatively better than a simpler tool. Six months in, we think that bet was correct. If you've been around other transcription APIs that lock up your workflow on long files, give Frenchie a try with 100 free credits — the difference is noticeable on the second or third long file, not the first.

The same shape for OCR and image generation

Transcription is the most obvious case for async — a 30-minute recording forces the issue. But the same queue-and-retrieve pattern shows up wherever your agent does non-trivial work:

- OCR uses the same async pipeline for large PDFs. A 500-page scan chunks out to ocr_batch jobs, merges through the same MERGE queue, and hands your agent a single Markdown result when it's ready.

- Image generation submits each generate_image call through the same BullMQ queue with the same job-id retrieval path, so your agent fires a prompt, keeps drafting, and collects the image file when it lands.

Three capabilities, one async contract. If you understood transcribe_to_markdown above, you already understand how ocr_to_markdown and generate_image behave on long jobs — only the inputs change.
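To illustrate what "one async contract" could look like, here is a small in-memory sketch. The three tool names come from the text above; the `AsyncToolQueue` class, the `mark_completed` hook, the parameter names (`file`, `prompt`), and the response shape are assumptions, and a plain dictionary stands in for the BullMQ queue.

```python
from dataclasses import dataclass
from itertools import count
from typing import Optional


@dataclass
class Job:
    job_id: str
    tool: str
    params: dict
    status: str = "processing"
    saved_to: Optional[str] = None


class AsyncToolQueue:
    """Every tool goes through the same submit / get_job_result pair."""

    TOOLS = ("transcribe_to_markdown", "ocr_to_markdown", "generate_image")

    def __init__(self) -> None:
        self._jobs = {}
        self._ids = count(1)

    def submit(self, tool: str, **params) -> dict:
        if tool not in self.TOOLS:
            raise ValueError(f"unknown tool: {tool}")
        job = Job(job_id=f"job_{next(self._ids)}", tool=tool, params=params)
        self._jobs[job.job_id] = job
        # Same response shape regardless of which tool was called.
        return {"jobId": job.job_id, "status": job.status}

    def mark_completed(self, job_id: str, saved_to: str) -> None:
        """Hypothetical hook a background worker would call when done."""
        job = self._jobs[job_id]
        job.status = "completed"
        job.saved_to = saved_to

    def get_job_result(self, job_id: str) -> dict:
        job = self._jobs[job_id]
        if job.status != "completed":
            return {"status": job.status}
        return {"status": "completed", "savedTo": job.saved_to}
```

Because only the inputs differ, agent-side code that handles one tool's job lifecycle can be reused for the other two unchanged.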